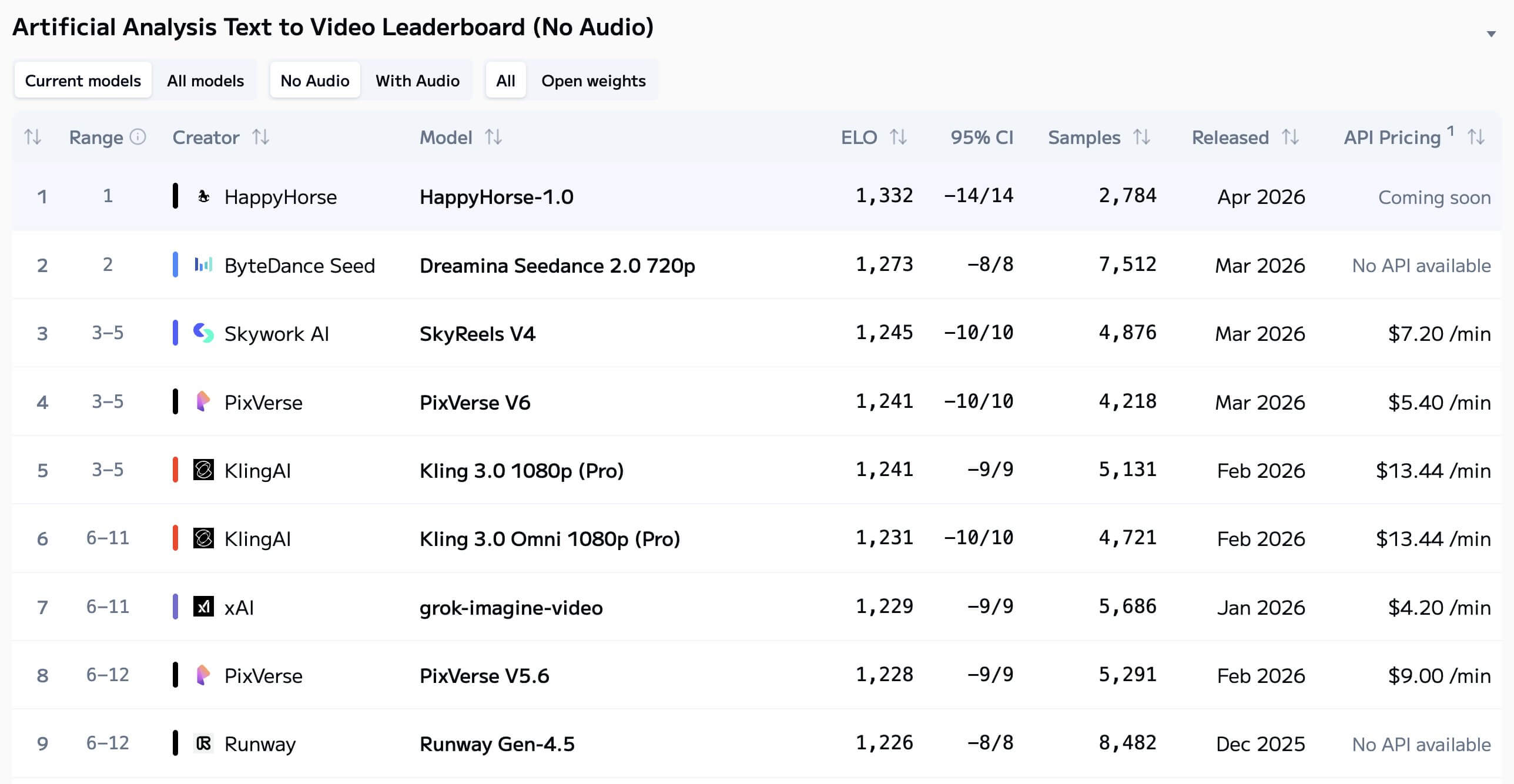

A sudden announcement from Artificial Analysis has captured the AI industry's attention. The independent firm (think of it as the 'Consumer Reports' of AI models) revealed that an unknown model called HappyHorse-1.0 had topped its performance charts, surpassing the well-established Seedance 2.0. As one of Seedance 2.0's providers, we chose not to dismiss this dark-horse competitor defensively. Instead, we took a closer look with genuine curiosity and industry expertise.

What is HappyHorse?

HappyHorse (HappyHorse-1.0) is a cutting-edge AI video generation model released on April 8, 2026 that specializes in both text-to-video (T2V) and image-to-video (I2V) generation, with the unique capability to jointly generate synchronized video and audio from text prompts. According to Artificial Analysis' data, it landed in the #1 spot for Text and Image to Video (No Audio) and the #2 spot for Text and Image to Video (With Audio).

| Key Feature | Description |
|---|---|
| Multi-modal capabilities | Supports both text-to-video and image-to-video transformations, bringing static images to life with fluid motion |
| Integrated audio generation | Creates complete video clips with sound design, narration, and ambient audio simultaneously from a single text input |
| Cinematic quality | Delivers exceptional motion quality, prompt-following precision, and visual consistency across longer video sequences |
| Minimalist architecture | Uses a single 40-layer Transformer design (no cross-attention) that processes text, video, and audio uniformly |
| Performance advantages | Has lower inference costs (approximately 50% of Seedance 2.0) |
| Open source | Open-source release announced, but no official access yet |

Verified Facts

Ranking Performance is Legitimate

- Based on blind Elo ratings: The data comes from Artificial Analysis and is free of manipulation, self-reported figures, and audio generation bias

- Blind voting mechanism: Users compare two videos generated from the same prompt without knowing which model created them, then vote for their preference

- Elo scoring system: Eliminates lab-reported bias and cherry-picked samples
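To make the voting mechanism above concrete, here is a minimal sketch of how blind pairwise votes can be turned into Elo ratings. It assumes the textbook Elo formulas with a 400-point scale and a K-factor of 32. Artificial Analysis' exact parameters and procedure are not described here, so treat the constants, the model names, and the votes as hypothetical.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0):
    """Return the new (rating_a, rating_b) after one blind vote.

    k is an assumed K-factor; a real leaderboard may use a different value
    or a different fitting method altogether.
    """
    expected_a = expected_score(rating_a, rating_b)
    score_a = 1.0 if a_won else 0.0
    delta = k * (score_a - expected_a)
    # Whatever A gains, B loses, so the rating pool stays constant.
    return rating_a + delta, rating_b - delta


# Hypothetical votes: (model shown as A, model shown as B, did A win?)
votes = [
    ("model_x", "model_y", True),
    ("model_x", "model_y", True),
    ("model_x", "model_y", False),
]

ratings = {"model_x": 1000.0, "model_y": 1000.0}
for a, b, a_won in votes:
    ratings[a], ratings[b] = update_elo(ratings[a], ratings[b], a_won)

print(ratings)
```

Because voters never see model names, a rating can only move through preferences on the outputs themselves, which is why this setup is harder to game than lab-reported scores.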

Technical Claims Are Documented

- Architecture: 40-layer single self-attention Transformer with 15 billion parameters

- Capabilities: Supports multilingual audio-video joint generation with unified Text-to-Video (T2V) and Image-to-Video (I2V) pipelines

- Design: Pure self-attention without cross-attention—first 4 and last 4 layers are modality-specific projections, middle 32 layers share parameters across all modalities
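The layer split described above can be sketched as a simple layout check. This is only an illustration of the stated 4 + 32 + 4 arrangement. The role names are hypothetical labels, and nothing here reflects HappyHorse's actual code, which is not public.

```python
from collections import Counter

TOTAL_LAYERS = 40  # stated depth of the Transformer
EDGE_LAYERS = 4    # stated number of modality-specific layers at each end


def layer_roles(total: int = TOTAL_LAYERS, edge: int = EDGE_LAYERS) -> list[str]:
    """Label each layer index with its role under the described design."""
    roles = []
    for index in range(total):
        if index < edge:
            roles.append("modality_specific_input")   # hypothetical label
        elif index >= total - edge:
            roles.append("modality_specific_output")  # hypothetical label
        else:
            roles.append("shared")  # parameters shared across all modalities
    return roles


roles = layer_roles()
counts = Counter(roles)
print(counts)
```

Under these figures, 32 of the 40 layers are shared across text, video, and audio, which is what makes the design "minimalist" compared with a multi-branch model.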

No Public Availability

- Status: GitHub and HuggingFace both show "Coming Soon" with no public weights

- Access: No API access, pricing, or service guarantees available

- Current state: As of April 8, 2026, all website links lead to placeholder pages with no functional resources

Unverified Claims

Development Team

- Speculation: Community guesses point to Asian tech giants (Alibaba, ByteDance) or Western labs (Google, the maker of Veo, and xAI, the maker of Grok)

- Confirmation: No official announcement exists; the only confirmed detail is that the model was submitted anonymously

Technical Specifications

- Parameter count: The 15 billion parameter claim is single-source without independent verification

- Inference speed: Performance metrics lack third-party validation

Open Source Promise

- Claim: Website states full open-source release planned

- Reality: Zero accessible resources exist, creating a contradiction between promise and availability

Ultimate Review: HappyHorse 1.0 Vs Seedance 2.0

| Comparison Dimension | HappyHorse-1.0 | Seedance 2.0 | Key Differences | Analysis Summary |
|---|---|---|---|---|
| Video Generation (No Audio) | Elo 1333/1392, leading in frame fluidity and detail restoration | Elo 1273/1355, stable video quality | HappyHorse shows superior visual performance without audio | HappyHorse excels in pure visual generation, though margin is modest |
| Audio-Video Synchronization | 2nd place Elo with audio, lip sync not publicly tested | Frame-level audio-visual sync, phoneme-level lip alignment in 8 languages | Seedance 2.0 leads industry in audio capabilities | Seedance demonstrates clear technical advantage in audio integration |
| Technical Architecture | Single Transformer with self-attention, no cross-attention | Dual-branch diffusion Transformer with parallel visual-audio modeling | Seedance has more mature audio generation architecture | Seedance's dual-branch design enables sophisticated multimodal processing |
| Commercial Availability | No API, no weights, no service guarantees | Platform accessible, open ecosystem in planning | Seedance is deployable; HappyHorse is leaderboard-only | Seedance offers practical usability while HappyHorse remains research-focused |
| Scene Capabilities | Excels at single-shot static scenes | Native multi-shot narrative, cross-frame character consistency | Seedance better suited for content creation | Seedance provides production-ready features for complex storytelling |
| Sample Stability | Limited samples for new model, high Elo volatility | Over 7,500 valid samples, stable ratings | Seedance results are more reliable | Seedance's extensive testing provides statistically robust performance data |
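To put the "margin is modest" remark in perspective, the Elo figures in the table can be converted into an expected head-to-head preference rate. The sketch below assumes the standard logistic Elo formula with a 400-point scale, which may differ from the exact model Artificial Analysis uses. It pairs the first and second figures from each cell as listed, since the table does not say which sub-category each figure belongs to.

```python
def expected_win_rate(elo_a: float, elo_b: float) -> float:
    """Expected share of blind votes won by A over B (standard Elo, 400 scale)."""
    return 1.0 / (1.0 + 10 ** ((elo_b - elo_a) / 400.0))


# (HappyHorse-1.0 Elo, Seedance 2.0 Elo), taken from the comparison table
pairs = {
    "first figures": (1333, 1273),
    "second figures": (1392, 1355),
}

for label, (happyhorse, seedance) in pairs.items():
    gap = happyhorse - seedance
    rate = expected_win_rate(happyhorse, seedance)
    print(f"{label}: gap of {gap} points, expected preference {rate:.1%}")
```

Under these assumptions, gaps of 60 and 37 points correspond to HappyHorse being preferred in somewhat more than half of head-to-head votes, not in nearly all of them. With limited samples for a new model, gaps of this size can still shift.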

Same Prompt Comparison: Real Generation Differences Between Both Models

Prompt 1:

A hula hoop spinning on a kid's waist, gradually climbing to their chest, then dropping to knees, then clattering to the floor. They pick it up to try again.

Prompt 2:

A golf ball in a cup rolling around the rim three times before finally dropping in. The golfer's body language matches each rotation. Audio: Ball rattle, exhale, plop.

Prompt 3:

A cat staring at its own reflection in a toaster, paw tapping the chrome surface. The distorted cat reflection taps back. Audio: Paw taps, confused meow.

Prompt 4:

A barista creating latte art by pouring steamed milk into espresso. The white milk submerges beneath the brown crema initially, then breaks through the surface as the cup fills. The barista's wrist makes precise oscillating movements, creating a rosetta pattern. The milk and espresso maintain their distinct colors while interacting at the boundary. Audio: The gentle pour of liquid, the hiss of the steam wand in the background.

Source: Original Comparison Analysis

My Summary:

| Comparison Aspect | HappyHorse | Seedance 2.0 |
|---|---|---|
| Visual Quality | Superior detail rendering in static scenes with refined textures; excels at small, subtle movements with natural motion flow | Strong overall stability with minimal artifacts; slightly less detailed in close-up textures |
| Audio-Visual Synchronization | Audio integration still in development; may require manual adjustment in post-production for precise sync | Frame-accurate audio-visual alignment out of the box; ideal for projects with tight production timelines |
| Creative Flexibility | Excellent for single-shot artistic pieces and experimental content; offers more granular control over individual scenes | Streamlined multi-shot workflow with automated transitions; better suited for narrative-driven content but less customizable per scene |
| Motion & Physics | Performs best in controlled, low-motion scenarios; occasional clipping in complex dynamic scenes | Handles large-scale motion and physical interactions more realistically; may sacrifice some fine detail for motion consistency |
| Best Use Cases | Art projects, product showcases, portrait videos, content requiring high visual fidelity | Short-form social media, commercial ads, narrative shorts requiring quick turnaround and audio sync |

Why Do Mystery Models Like HappyHorse Create Problems?

The HappyHorse phenomenon exposes a critical issue: without verified attribution, we can't assess whether benchmark results reflect genuine capability or test optimization. More critically, when problems arise—harmful outputs, unexpected behaviors, business issues—there's no accountability chain and no recourse for users. This lack of transparency makes it impossible to verify claims or ensure reliability in real-world applications.

The Builder's Dilemma

Mystery models may represent genuine technical breakthroughs and serve as valuable "proof of possibility," pushing established players to innovate faster. But for teams building actual products, a model without APIs, licensing terms, or support has near-zero practical value. You can't build roadmaps around it, explain it to investors, or integrate it into products that require compliance and stability.

The Strategic Reality

AI increasingly feels like it is splitting into two parallel tracks. One pushes benchmark scores higher and generates amazement around "mystery models". The other has to answer more practical questions: can these models be deployed in a stable way, who is responsible when things go wrong, and can the team provide long-term support? The first track does bring technical excitement and a sense of direction for the industry. But for those who are actually building products and driving commercialization, a few practical dimensions matter more: whether generation quality is stable, whether deployment capability is strong, whether service support is reliable, and whether the ecosystem is open. Since launching in March, Seedance 2.0 has already supported more than 100,000 creators' commercial projects. Rankings alone cannot replace the value of that kind of real usage and delivery.

How Should Creators Choose Between HappyHorse and Seedance 2.0?

| Use Case | HappyHorse-1.0 | Seedance 2.0 |
|---|---|---|
| Visual Short-Form Content | Worth monitoring, not yet accessible | Available now |
| Audio-Integrated Content | Limited audio capabilities | Frame-level audio-visual sync |
| Enterprise Commercial Use | No official support or API | Platform accessible with planned ecosystem |
| Technical Research | Awaiting potential weight release | Gradual research access planned |
| Immediate Production Needs | Not currently available | Ready to use, no waitlist |

FAQs for HappyHorse

1. Who made HappyHorse-1.0?

At this point, no official source has clearly identified the team behind HappyHorse-1.0. There is community speculation, but no confirmed developer or organization has publicly claimed it yet.

2. Is HappyHorse-1.0 available to use right now?

There does not appear to be a verified, production-ready way to access HappyHorse-1.0 right now. Public information is still limited, so availability, access methods, and release status remain unclear.

3. Are HappyHorse websites safe to use?

Since there is no confirmed official HappyHorse website at the moment, it is best to be cautious with any site using that name. This is especially important if the site asks for payment, subscription, or account registration.

4. Is HappyHorse-1.0 free or open-source?

That is still unclear. We have not seen an official source confirming public weights, open-source availability, or a verified free access path, so it is safer not to assume that it is fully open yet.

5. Is HappyHorse-1.0 the same as WAN 2.7?

There has been some discussion around that idea, but nothing has been confirmed. For now, it is better to treat HappyHorse-1.0 and WAN 2.7 as separate unless an official source says otherwise.

6. How does Artificial Analysis rank video models?

Artificial Analysis uses blind user voting. People compare outputs from the same prompt without seeing which model made them, and those preferences are then converted into Elo-style rankings.

7. Can HappyHorse generate long-form video?

It is not clear yet whether HappyHorse supports long-form video in a usable public product. If you want to explore longer video workflows today, you can try the long-video features on Novi AI and also experience Seedance 2.0.

8. How should I interpret HappyHorse-1.0's leaderboard performance?

The rankings are interesting and worth watching, but leaderboard results are only one part of the picture. For real use, it still helps to look at access, reliability, support, and whether the product is actually ready to use.

9. When will HappyHorse-1.0 be released?

There is no confirmed public timeline yet. Until there is a clear official update, it is best to treat the release timing, access details, and availability claims with a bit of caution.

Conclusion

HappyHorse-1.0's rise to the top demonstrates meaningful progress in AI video technology, while reminding the industry of an important distinction: leaderboard rankings provide valuable benchmarks, but practical applicability ultimately determines real-world impact.
