Closed-source large language models have undergone several iterations over the past few months, but they've also experienced information leakage issues—a real concern. While you can continue using ChatGPT as usual, you might want to explore whether you can run LLMs and perform tasks locally instead. Additionally, the same GPU that powers cutting-edge LLMs can also run AI video generation models, meaning your GPU could unlock even more possibilities.

This guide cuts through the technical jargon to provide a clear, straightforward path for both local LLM deployment and local text-to-video generation. You'll learn how to evaluate your current graphics card (GPU), understand what kinds of AI models (both LLMs and video models) it can handle, and ultimately choose the right setup to get the best performance for your budget. Our goal is to make running private AI (LLMs) and creating AI-generated videos simple, putting you back in control of your data and creative workflow.

Understanding GPU Requirements for LLMs & Video Models

Before diving into specific GPU recommendations, it's critical to understand the core metrics that determine how well a GPU can run LLMs and AI video models. These metrics will guide every hardware decision you make.

VRAM (Video RAM)

VRAM is the GPU's dedicated memory—the workspace where models (LLMs or video models) reside during inference. For both use cases, VRAM is non-negotiable: the model must fit in VRAM to run smoothly; relying on system RAM will severely degrade performance (especially for video generation, which is more VRAM-intensive).

Rule of thumb: Roughly 2 GB of VRAM per billion parameters for LLMs at FP16 precision. For open-source AI video models (e.g., Wan2.2, Stable Video Diffusion), VRAM requirements are clearly defined based on model variants: Wan2.2 only needs 8GB VRAM for basic 480P generation, while Stable Video Diffusion (SVD) requires 16GB+ VRAM, and its SVD-XT variant needs 20GB+ VRAM for higher frame rates and resolution.

- A 7B model needs ~14 GB (FP16)

- A 13B model needs ~26 GB (FP16)

- Entry-level AI video (Wan2.2, 480P short clips) needs 8-12 GB VRAM

- Mid-level AI video (Wan2.2 Enhanced, SVD Basic, 720P short clips) needs 16-24 GB VRAM

- High-end AI video (Wan2.2 Advanced, SVD-XT, 1080P+ long clips) needs 24+ GB VRAM

Quantization (below) can reduce LLM VRAM requirements substantially; for video models, quantization has minimal impact, so VRAM capacity is even more critical here—especially for SVD-XT, which demands higher VRAM for 25-frame video generation.
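
The LLM rule of thumb above is simple arithmetic: parameter count times bytes per parameter. Here is a minimal Python sketch of it. The function name `estimate_weight_vram_gb` is hypothetical, and the result covers the weights only (no KV cache, activations, or framework overhead), so treat it as a lower bound rather than a guarantee.

```python
def estimate_weight_vram_gb(params_billions: float, bits_per_param: int = 16) -> float:
    """Approximate VRAM (GB) needed just to hold the model weights.

    Follows the guide's rule of thumb: 1 billion parameters at 16 bits
    (2 bytes each) is roughly 2 GB. Runtime overhead such as the KV cache
    is NOT included, so real usage will be higher.
    """
    bytes_per_param = bits_per_param / 8
    return params_billions * bytes_per_param


# Reproduce the two LLM bullet points above.
for size in (7, 13):
    print(f"{size}B @ FP16: ~{estimate_weight_vram_gb(size):.0f} GB")
```

Passing `bits_per_param=8` or `bits_per_param=4` gives the corresponding estimate for quantized weights.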

Memory Bandwidth

Memory bandwidth (measured in GB/s) is how quickly a GPU can move data within VRAM. It directly affects:

- LLMs: Token generation speed (how responsive conversations feel)

- AI Video Models: Rendering speed (how fast clips generate)

A GPU with ample VRAM but low bandwidth may load models, but it will be slow—frustrating for interactive LLM use and time-consuming for video generation. Older GPUs with generous VRAM can still perform well if they keep the full model (and KV cache for LLMs) on-GPU, avoiding CPU offload that negates advantages.

Note

Modern GPUs like the RTX 4090 exceed 1000 GB/s; older or budget cards may offer 400–600 GB/s. For interactive use, aim for ≥600 GB/s to keep conversations fluid.
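
One way to see why bandwidth matters for LLMs: during single-stream generation, producing each token generally means reading all of the model's weights from VRAM once, so bandwidth divided by model size gives a rough ceiling on tokens per second. The sketch below applies that simplification. It is an assumption for illustration, not a benchmark: real speeds are lower because of compute, KV-cache reads, and software overhead. The function name and the 500 GB/s "budget card" figure are hypothetical.

```python
def max_tokens_per_second(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Rough upper bound on decode speed for a memory-bound LLM.

    Assumes every generated token requires one full pass over the weights,
    so the ceiling is simply bandwidth / model size.
    """
    if model_size_gb <= 0:
        raise ValueError("model_size_gb must be positive")
    return bandwidth_gb_s / model_size_gb


# A 13B model quantized to 4-bit has roughly 6.5 GB of weights.
for name, bandwidth in (("RTX 4090 (1,008 GB/s)", 1008), ("budget card (500 GB/s)", 500)):
    ceiling = max_tokens_per_second(bandwidth, 6.5)
    print(f"{name}: at most ~{ceiling:.0f} tokens/sec")
```

Measured throughput usually lands well below this ceiling, but the ratio between two cards tends to track their bandwidth ratio when the whole model fits in VRAM.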

Quantization

Quantization reduces weight precision (e.g., FP16 → INT8/INT4), shrinking the VRAM footprint for LLMs by 2–4×—often with minimal quality loss for typical use. This lets you run larger LLMs on the same hardware. For AI video models, quantization has limited benefit (video generation relies more on raw VRAM and bandwidth), so focus on VRAM capacity here.

Example: A 13B LLM that needs ~26 GB at FP16 can run in 8–10 GB when quantized to 4-bit. For video models like Wan2.2 and SVD, quantization may reduce output quality (e.g., blurriness in 480P clips), so prioritize VRAM over quantization.
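
To make the idea concrete, here is a toy Python sketch of symmetric (absmax) 8-bit quantization on a handful of made-up weights. It only illustrates the principle of trading precision for size; the quantization schemes shipped with real LLM runtimes are more elaborate (for example, they typically use per-group scales), and the function names here are hypothetical.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    # `or 1.0` avoids a zero scale when every weight is 0.
    scale = max(abs(w) for w in weights) / 127 or 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.12, -0.5, 0.33, 0.9, -0.07]  # made-up example values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("int8 values:", q)
print(f"largest rounding error: {max_error:.4f}")
```

Each weight now needs one byte instead of two, and the rounding error per weight is bounded by half the scale, which is why quality loss is often small for typical use.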

VRAM Requirements by Model Size

| Model Size | FP16 (Unquantized) | INT8 (8-bit Quantized) | INT4 (4-bit Quantized) | Minimum GPU |
|---|---|---|---|---|
| 7B | 14GB | 7GB | 3.5GB | RTX 3060 12GB |
| 13B | 26GB | 13GB | 6.5GB | RTX 3080 10GB (INT4) |
| 30B | 60GB | 30GB | 15GB | RTX 4090 24GB (INT4) |
| 70B | 140GB | 70GB | 35GB | RTX 6000 Ada 48GB (single GPU) or 2× RTX 3090 with NVLink (INT4) |
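
The table can also be applied in code. The sketch below picks the highest precision whose weights fit in a given amount of VRAM. The 20% headroom reserved for the KV cache and runtime overhead is an assumed illustrative value, not a figure from this guide, and the names are hypothetical.

```python
# Ordered from highest to lowest precision.
PRECISION_BITS = {"FP16": 16, "INT8": 8, "INT4": 4}


def best_precision_that_fits(params_billions, vram_gb, headroom=0.20):
    """Return the highest precision whose weights fit, or None if none do.

    `headroom` is the fraction of VRAM kept free for KV cache and
    overhead (an assumption; tune it for your workload).
    """
    usable_gb = vram_gb * (1 - headroom)
    for name, bits in PRECISION_BITS.items():
        weights_gb = params_billions * bits / 8
        if weights_gb <= usable_gb:
            return name
    return None


for params, vram in ((7, 12), (13, 10), (30, 24), (70, 24)):
    print(f"{params}B on {vram} GB -> {best_precision_that_fits(params, vram)}")
```

A result of `None` means the model needs more VRAM, a second GPU, or more aggressive quantization than 4-bit.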

2026 Top GPU Picks: Prioritized for LLMs & AI Video Generation

Now that you understand the key metrics, here are our specific GPU recommendations for every budget and use case—prioritized for both LLM performance and AI video generation compatibility.

1 Best Consumer Picks: NVIDIA GPUs

NVIDIA's CUDA platform has become the industry standard for AI workloads, offering unmatched software support and optimization. Every major LLM framework—from PyTorch to TensorFlow—is built with CUDA in mind, making NVIDIA GPUs the path of least resistance for local deployment.

NVIDIA RTX 4090

- Pros: Still a powerhouse with 24GB of VRAM and 1,008 GB/s of bandwidth, now serving as the high-end alternative to the 5090. Token generation reaches 40-50 tokens/sec for 13B LLMs. It offers better value than the 5090 for users who don't strictly need the extra 8GB of memory. You can comfortably run quantized 30B models, experiment with 70B models under aggressive quantization, and generate 1080P AI video with Wan2.2 Enhanced and SVD-XT (with minor optimization).

- Cons: Remains expensive, though prices have softened slightly with the 50-series launch. Its 24GB buffer is now the second-largest in the consumer class, which acts as a hard limit for the very largest models.

NVIDIA RTX 5090

- Pros: The new consumer flagship, with 32GB of GDDR7 VRAM and 1,792 GB/s of bandwidth. The extra 8GB over the 4090 leaves more room for 30B-class LLMs and high-resolution AI video, and the higher bandwidth speeds up both token generation and video rendering. Fully compatible with all variants of Wan2.2 and Stable Video Diffusion.

- Cons: Significantly more expensive than the 4090. Requires a high-wattage PSU and massive case clearance. While 32GB is an upgrade, it is still a hard limit for running the very largest models (e.g., Llama 3.1 405B) locally.

Quick Compare: RTX 4090 vs RTX 5090

| Spec | RTX 4090 | RTX 5090 | Improvement | Impact on AI Models |
|---|---|---|---|---|
| Architecture | Ada Lovelace | Blackwell (5nm) | Next Gen | Newer Tensor Cores add FP4 support (see the AI Compute row). |
| VRAM | 24GB GDDR6X | 32GB GDDR7 | +33% | Run 30B+ LLMs natively; handle high-res AI video without compromises. |
| Bandwidth | 1,008 GB/s | 1,792 GB/s | +78% | Faster token generation and AI video rendering (critical for SVD-XT's 25-frame output). |
| CUDA Cores | 16,384 | 21,760 | +33% | Major boost in raw computational power for complex AI tasks (LLMs + SVD-XT high-res generation). |
| AI Compute (FP4) | Not Supported | 3,352 TOPS | New Feature | Increases large model inference and video rendering (Wan2.2, SVD-XT) speed by 45%+. |
| TGP (Power) | 450W | 575W | +28% | Requires a high-wattage, robust power supply unit (PSU). |

The RTX 4090 is far from obsolete, but the RTX 5090 is a clear performance upgrade. If you already own an RTX 4090 and don't need the absolute maximum performance for your use case, it remains an excellent choice. However, if your work frequently involves running large LLMs or visual models and demands high-speed responsiveness, upgrading to the RTX 5090 is worth considering.
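
The Improvement column in the comparison table is plain percentage arithmetic on the two spec values. A short Python sketch for reproducing it (the function name is hypothetical; the spec values are copied from the table):

```python
def percent_increase(old: float, new: float) -> float:
    """Relative increase from `old` to `new`, in percent."""
    return (new - old) / old * 100


# (RTX 4090 value, RTX 5090 value) taken from the comparison table.
specs = {
    "VRAM (GB)": (24, 32),
    "Bandwidth (GB/s)": (1008, 1792),
    "CUDA cores": (16384, 21760),
    "TGP (W)": (450, 575),
}

for name, (rtx_4090, rtx_5090) in specs.items():
    print(f"{name}: +{percent_increase(rtx_4090, rtx_5090):.0f}%")
```

The same helper works for comparing any two cards you are considering.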

2 Professional and High-End Uses

These GPUs are for enterprise, research, or power users who need to run massive LLMs (70B and larger) or generate high-end AI video (4K, complex scenes).

NVIDIA RTX 6000 Ada Generation

- Pros: 48GB VRAM, enough to run 70B LLMs at 4-bit quantization on a single card (about 35GB of weights), fine-tune smaller LLMs, and run 4K AI video generation models. Supports enterprise workflows and advanced AI features. Reliable for 24/7 use, ideal for batch video generation.

- Cons: Very high cost, designed for workstations, not typical desktops.

NVIDIA A100

- Pros: Proven enterprise reliability with up to 80GB VRAM, enough for enormous LLMs, large datasets, and high-resolution AI video. Ideal for data centers and production inference servers running Wan2.2 and SVD at scale.

- Cons: Extremely expensive ($10,000+), designed for data centers (not local desktops). Overkill for 99% of users.

3 Budget-Conscious GPU Picks

Not everyone can justify flagship GPU prices. Fortunately, because GPU generations turn over quickly, the used market offers exceptional opportunities for budget-conscious LLM and entry-level video model enthusiasts. For AI work, the key point is that older GPUs with large VRAM pools often outperform newer GPUs with less memory.

NVIDIA RTX 3090 24GB

- Pros: Best value for budget users: $700-900 used, with 24GB VRAM (matching the RTX 4090) and 70-80% of its performance. Runs 30B LLMs comfortably (4-bit quantized) and 480P-720P AI video with Wan2.2 Enhanced and SVD Basic. Undisputed budget champion. Fully compatible with all Wan2.2 variants and SVD Basic.

- Cons: Used market availability is inconsistent; no warranty in most cases. Older architecture, so SVD-XT rendering is slower than newer cards.

RTX 4070 Ti Super 16 GB

- Pros: Practical sweet spot for 7B–13B LLMs (8-bit quantized) and light AI video (480P-720P short clips) with Wan2.2 Enhanced and SVD Basic. Excellent perf-per-watt, quiet, and efficient for office environments.

- Cons: More expensive than used RTX 3090; 16GB VRAM is insufficient for 30B LLMs or SVD-XT.

RTX 4090 vs 4080 vs 4070 Ti

| Comparison Dimensions | RTX 4090 | RTX 4080 | RTX 4070 Ti |
|---|---|---|---|
| Hardware Parameters | CUDA Cores: 16,384; Tensor Cores: 512; VRAM: 24GB GDDR6X; VRAM Bandwidth: 1,008 GB/s; FP16/BF16: 166 TFLOPS | CUDA Cores: 9,728; Tensor Cores: 304; VRAM: 16GB GDDR6X; VRAM Bandwidth: 717 GB/s; FP16/BF16: 90 TFLOPS | CUDA Cores: 7,680; Tensor Cores: 240; VRAM: 12GB GDDR6X; VRAM Bandwidth: 504 GB/s; FP16/BF16: 68.2 TFLOPS |
| LLM Performance | Max Quantized Model: 70B; Inference Speed (Llama 2 7B, 8-bit): 120-150 tokens/sec; Fine-Tuning: 7B/13B (FP16), 34B (8-bit) | Max Quantized Model: 34B; Inference Speed (Llama 2 7B, 8-bit): 80-100 tokens/sec; Fine-Tuning: 7B (FP16), 13B (8-bit) | Max Quantized Model: 13B; Inference Speed (Llama 2 7B, 8-bit): 50-70 tokens/sec; Fine-Tuning: 7B (8-bit only) |
| Video Generation | Max Resolution: 4K; FPS: 15-20; 5s 1080P Latency: 15-25s | Max Resolution: 2K; FPS: 10-15; 5s 1080P Latency: 25-35s | Max Resolution: 1080p; FPS: 5-8; 5s 1080P Latency: 35-50s |
| Image Generation | Max Resolution: 2K, 1K; Speed: 2-3s/img; Batch (1024): 10+ | Max Resolution: 1K; Speed: 4-6s/img; Batch (1024): 5-8 | Max Resolution: 768p, 1024p; Speed: 8-12s/img; Batch (1024): 2-3 |
| Compatibility | Full support (CUDA 12.x, TensorRT, NIM) | Full support (CUDA 12.x, TensorRT, NIM) | Basic support (needs manual optimization) |
| TDP | 450W | 320W | 285W |
| Reference Price | $1,599 - $1,799 | $1,199 - $1,399 | $799 - $899 |
| Recommended Users | Professional/Teams (High Demand) | Content Creators/Small Teams | Enthusiasts/Beginners (Entry-Level) |

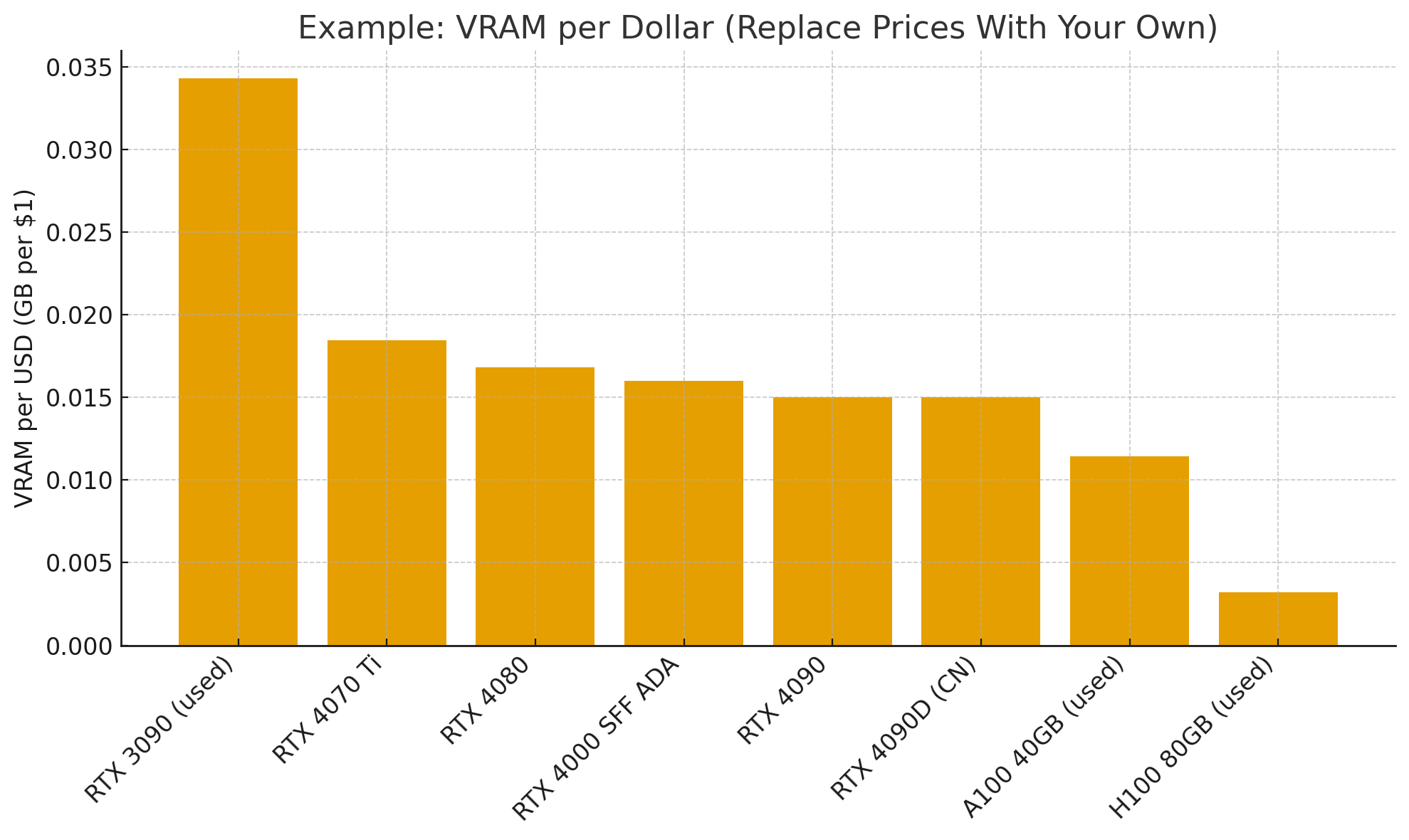

Price-to-Performance Analysis

When evaluating GPUs for AI work, traditional gaming benchmarks become irrelevant. Instead, focus on VRAM per dollar: VRAM capacity divided by price. This metric reveals surprising value propositions. A used RTX 3090 with 24GB of VRAM at $700-900 offers better value than a new RTX 4070 Ti with 12GB at a similar price, despite the older architecture, and it supports more variants of Wan2.2 and Stable Video Diffusion. For AI work, VRAM capacity trumps architectural improvements in most cases.
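
The VRAM-per-dollar comparison is easy to script. The Python sketch below ranks a few cards using prices from this guide; the midpoint prices are assumptions taken from the quoted ranges, so substitute the prices you actually find. The function name is hypothetical.

```python
def vram_gb_per_100_usd(vram_gb: float, price_usd: float) -> float:
    """GB of VRAM you get for every $100 spent."""
    return vram_gb / price_usd * 100


# (VRAM in GB, assumed price in USD). Prices are midpoints of the
# ranges quoted in this guide and will vary by market.
cards = {
    "RTX 3090 (used)": (24, 800),
    "RTX 4070 Ti (new)": (12, 849),
    "RTX 4090 (new)": (24, 1699),
}

ranked = sorted(
    cards.items(),
    key=lambda item: vram_gb_per_100_usd(*item[1]),
    reverse=True,
)

for name, (vram, price) in ranked:
    print(f"{name}: {vram_gb_per_100_usd(vram, price):.2f} GB per $100")
```

VRAM per dollar is only a first filter: a card still has to clear the absolute VRAM threshold for the models you want to run.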

Professional Cards vs Consumer Cards

Professional cards (A100/H100) offer undeniable advantages: massive VRAM pools (40–80GB), NVLink for true multi-GPU scaling, ECC memory for production reliability, and data center-grade cooling solutions. These features matter for production inference servers handling thousands of requests or research institutions training custom models.

However, the downsides are equally significant. Prices start at $10,000+, power requirements often exceed standard PSUs, cooling solutions require a server chassis, and the complexity is too great for typical local deployments. Unless you are building production infrastructure or have specific enterprise requirements, these cards represent significant overkill.

Consumer RTX cards are the pragmatic choice for 99% of local LLM and video model users. They fit standard desktop cases, work with regular power supplies, run quietly enough for office environments, and cost a fraction of professional cards.

Matching AI Model to Your GPU: What Can You Run?

Here are some of the best models you can run, categorized by the VRAM on your GPU.
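
If you are not sure how much VRAM your card has, the following Python sketch reads it from `nvidia-smi`, the command-line tool installed with NVIDIA's drivers. It assumes an NVIDIA GPU with `nvidia-smi` on your PATH; the helper names are hypothetical, and on systems without the tool the query simply returns an empty list.

```python
import subprocess


def parse_gpu_csv(text):
    """Parse 'name, memory_in_MiB' rows into (name, vram_gb) tuples."""
    gpus = []
    for line in text.strip().splitlines():
        # Split on the last comma so commas in a GPU name do not break parsing.
        name, mem_mib = (part.strip() for part in line.rsplit(",", 1))
        gpus.append((name, round(int(mem_mib) / 1024, 1)))
    return gpus


def query_nvidia_gpus():
    """Return installed NVIDIA GPUs, or [] if nvidia-smi is unavailable."""
    cmd = [
        "nvidia-smi",
        "--query-gpu=name,memory.total",
        "--format=csv,noheader,nounits",
    ]
    try:
        result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    except (OSError, subprocess.CalledProcessError):
        return []
    return parse_gpu_csv(result.stdout)


if __name__ == "__main__":
    for name, vram_gb in query_nvidia_gpus():
        print(f"{name}: {vram_gb} GB VRAM")
```

Use the reported figure to pick the matching VRAM tier in the tables that follow.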

Best LLMs for 8GB VRAM (2026)

| GPU VRAM | Model | Quantization | Why / Notes |
|---|---|---|---|
| 8GB | Mistral 7B | INT4 | Great balance of speed and quality for general use. |
| 8GB | Llama 3.1 8B | INT4 | Smooth on consumer GPUs; strong multilingual support. |
| 8GB | Phi-4 Mini | INT4 | Compact model from Microsoft; better for coding tasks than Phi-3. |
| 8GB | Gemma 7B | INT4 | Efficient Google model optimized for modest hardware. |

Best LLMs for 12GB VRAM (2026)

| GPU VRAM | Model | Quantization | Why / Notes |
|---|---|---|---|
| 12GB | Llama 2 13B | INT4 | Top choice for conversation and reasoning on mid-range GPUs. |
| 12GB | CodeLlama 13B | INT4 | Specialized for programming; solid code completion and Q&A. |
| 12GB | Mistral-Nemo 12B | INT4 / INT8 | Fits comfortably at INT4 with room for context; good generalist. |
| 12GB | Yi-1.5 9B | INT8 | Strong long-context performance with stable 8-bit runs. |

Best LLMs for RTX 4090 (24GB VRAM)

| GPU VRAM | Model | Quantization | Why / Notes |
|---|---|---|---|
| 24GB (RTX 4090) | Llama 3.1 70B | INT4 or lower | Flagship local performance for complex reasoning and depth. INT4 weights (~35GB) exceed 24GB, so expect partial CPU offload or more aggressive quantization. |
| 24GB (RTX 4090) | Mixtral 8x7B | INT4 (MoE) | Mixture-of-Experts with GPT-3.5-class results on many tasks. |
| 24GB (RTX 4090) | DeepSeek Coder 33B | INT4 | Superior for software development, debugging, and code synthesis. |
| 24GB (RTX 4090) | Qwen 2.5 32B | INT4 | Excellent multilingual and mathematical capabilities. |

Best Image Models for RTX 4090 (24GB VRAM)

| GPU VRAM | Model | Quantization | Why / Notes |
|---|---|---|---|
| 24GB (RTX 4090) | Z-Image-Turbo | FP16 | Suitable for professional illustration and product design. |
| 24GB (RTX 4090) | Stable Diffusion 4.0 Ultra | FP16 | Ideal for professional creators (photographers, designers) needing high-quality outputs. |
| 24GB (RTX 4090) | FLUX.1-Krea-dev | FP16 | Perfect for professional workflows. |
| 24GB (RTX 4090) | Adobe Firefly Local Pro | FP16 | Adobe's local professional model, accurate color reproduction and industry-standard output. |

Best Video Models for RTX 4090 (24GB VRAM)

| GPU VRAM | Model | Quantization | Why / Notes |
|---|---|---|---|
| 24GB (RTX 4090) | Wan2.2 TI2V-5B | FP16 | Supports text-to-video and image-to-video, perfect for movie-level short creation. |
| 24GB (RTX 4090) | HunyuanVideo-1.5 | FP16 | Supports diverse scene generation with stable motion. |
| 24GB (RTX 4090) | Stable Video Diffusion 3 Ultra | FP16 | Ideal for professional video creators (advertisements, short films). |
| 24GB (RTX 4090) | Wan2.2 I2V-A14B | FP16 | Perfect for animators and visual content creators. |

NVIDIA GPU Alternatives: More Options and Apple Silicon

While NVIDIA offers the smoothest experience, other options exist for those willing to experiment or who have different priorities.

1 AMD GPUs: High VRAM, More Tinkering

AMD's flagship RX 7900 XTX offers strong hardware specifications—24GB of VRAM and ~960 GB/s bandwidth—often matching the RTX 3090/4090 at a lower price. However, its main drawback lies in software support. While ROCm, AMD's compute platform and CUDA alternative, has improved significantly, it still lags behind in framework compatibility and ease of use. It often requires manual configuration and lacks out-of-the-box support for many tools.

For users comfortable with Linux and troubleshooting, it can be a viable option, but Windows users are generally better served by NVIDIA.

2 Apple Silicon: A Unified Memory Powerhouse

Apple Silicon (M1 through M4 series) introduces a unique unified memory architecture where system RAM and VRAM are shared. Current Mac Studio models with an M3 Ultra support up to 512GB of unified memory and over 800 GB/s bandwidth, enabling very large language models to run entirely in memory. On price, a base M3 Ultra Mac Studio ($3,999) with 96GB of unified memory costs less than a single high-end professional GPU.

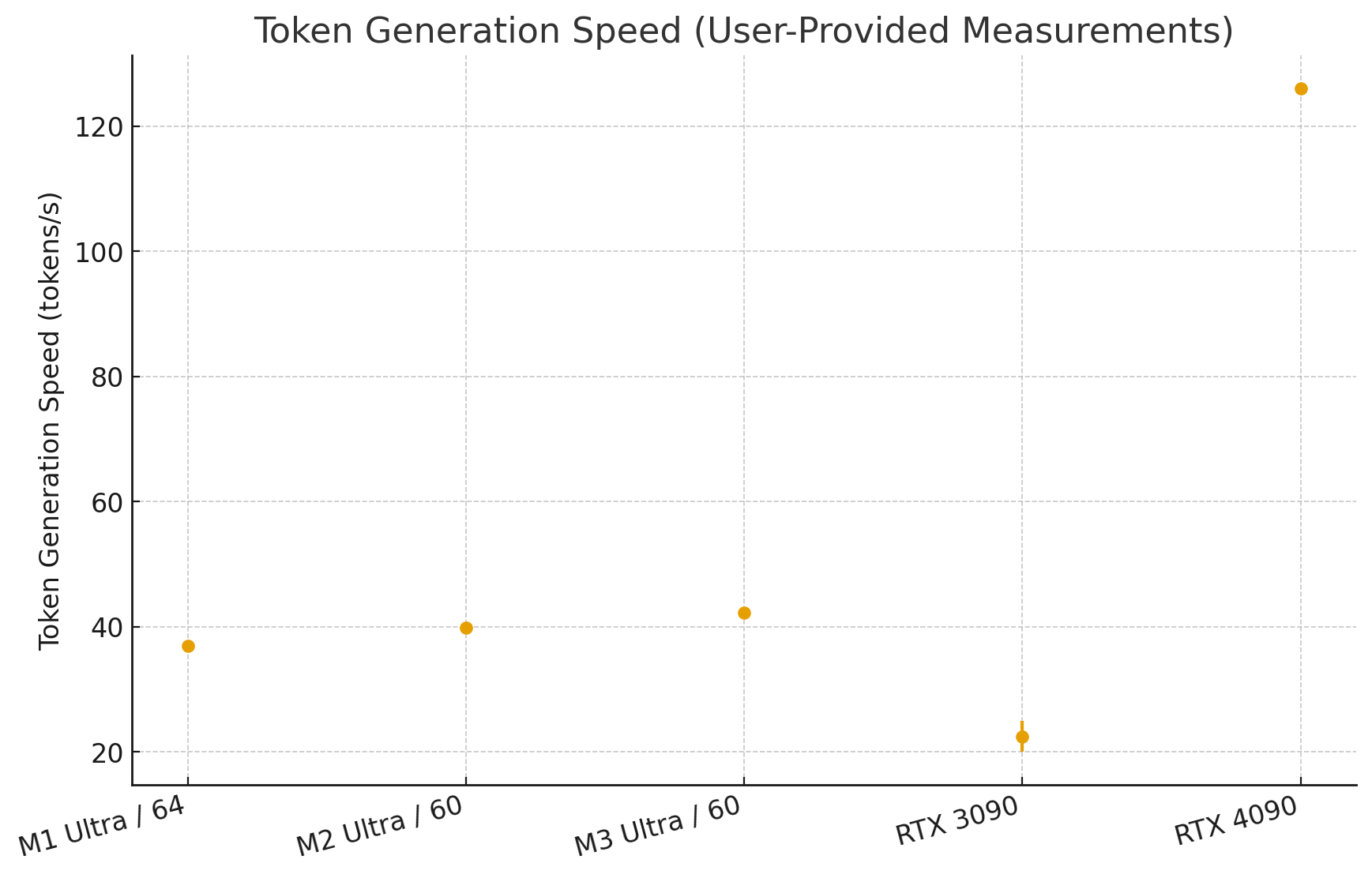

However, token generation speeds lag behind dedicated GPUs—expect 5-15 tokens per second versus 30-50 on an RTX 4090. Apple Silicon excels for experimentation with large models and development work where response speed isn't critical. For production deployments requiring fast inference, dedicated GPUs remain superior.

3 The Experimental Zone: Intel and Regional Players

Intel Arc GPUs represent an interesting wildcard. The A770 (16GB VRAM) offers substantial memory at competitive prices, but software support remains embryonic. Intel's commitment to AI acceleration is clear, but the ecosystem needs time to mature. Consider Intel Arc only for experimentation, not production use.

Regional alternatives like Huawei GPUs face availability challenges outside their home markets. While these GPUs are technically capable and attract considerable attention and discussion, obtaining hardware and accessing documentation present significant barriers for most users.

Frequently Asked Questions (FAQ)

1 What's the Minimum GPU for Running Local Models?

For local LLMs and video generation, 8GB VRAM is the absolute minimum. This allows you to run 7B parameter models with 4-bit quantization, providing ChatGPT-3.5-like capabilities. 12-16GB is the sweet spot for flexibility and complex tasks. For professional, high-res workflows, 24GB+ is essential to avoid bottlenecks. Anything under 8GB is severely limiting.

2 Which graphics cards create AI videos the fastest?

NVIDIA's RTX 4090 generally outperforms most cards in AI video creation due to its large core count and advanced Tensor Cores. The RTX 3090 is also very capable but lags slightly behind the 4090 in newer AI-related tasks. AMD's Radeon RX 7900 XTX offers good competition, especially in tasks that are well-optimized for its architecture, but may not match NVIDIA in some AI-specific benchmarks.

3 Do I need a graphics card with lots of memory (VRAM) to run AI models?

Yes, VRAM is often the most critical factor. The NVIDIA RTX 3090 and RTX 4090 come with 24GB of VRAM, while AMD's Radeon Pro W6800X Duo offers 32GB, making them excellent choices for diverse AI workloads. High VRAM is essential for LLMs to load larger, "smarter" models with longer context windows. For image generation, it allows for higher resolutions and fine-tuning, while for video models, it handles the complex temporal data required to synthesize coherent frames.
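
Longer context windows cost VRAM because the LLM stores a key and a value vector for every token in every layer (the KV cache). A common estimate is 2 × layers × KV heads × head dimension × tokens × bytes per value. The Python sketch below applies it; the default shape (32 layers, 32 KV heads, head dimension 128, FP16 values) is an assumption matching a Llama-2-7B-style model, so check your model's configuration before relying on the numbers. The function name is hypothetical.

```python
def kv_cache_gib(seq_len, n_layers=32, n_kv_heads=32, head_dim=128, bytes_per_value=2):
    """Estimate KV-cache size in GiB for a single sequence.

    The leading factor of 2 accounts for storing both keys and values.
    Defaults assume a Llama-2-7B-style model with an FP16 cache.
    """
    total_bytes = 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value
    return total_bytes / 1024**3


for context in (4096, 32768):
    print(f"{context} tokens: {kv_cache_gib(context):.1f} GiB of KV cache")
```

This memory comes on top of the model weights, which is why a card that barely fits a model at short context can run out of VRAM as conversations grow.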

4 NVIDIA vs. AMD: Which brand works better for AI software?

NVIDIA is the leading brand for AI software. Its GPUs, especially the RTX series, include Tensor Cores, and the CUDA platform is supported out of the box by nearly every major AI framework. AMD also offers capable GPUs such as the Radeon RX 7000 series, with high-speed memory interfaces and large numbers of processing cores, but its ROCm software stack often requires more manual configuration.

Conclusion

The NVIDIA RTX 5090 stands as the top choice for users who demand peak performance and maximum future-proofing for both local LLM and AI video workloads. The RTX 4090 remains an exceptionally capable, modern high-end GPU for heavy inference tasks. The used RTX 3090 delivers unbeatable value, thanks to its critical 24GB of VRAM at a much more accessible price.

![Best GPU for LLMs & AI Video Generation[2026]: Hardware Guide for Creators](https://images.noviai.ai/noviaien/assets/article/video-tips/best_gpu_for_ai_video_generation.png)
