As we navigate 2026, the question is no longer if AI can create animation, but rather how we manage the industrial-scale shift it has triggered.

As someone who has watched these models evolve from flickering pixels in 2023 to the cinematic-grade outputs of today, I want to provide a definitive technical and strategic outlook on the state of AI-generated anime.

At the center of this evolution is the industry's most pressing question: Can AI make a full anime episode? To answer this, we must look beyond the 15-second viral clips and analyze the modular, AI-native pipelines that are currently redefining the 22-minute broadcast standard.

Can AI Make a Full Anime Episode?

Yes. AI can now produce a full 22-minute anime episode, but the "how" has changed significantly, and the distinction matters enormously.

Here's the caveat no one puts in the headline: you won't get there with a single prompt on a single platform.

We have moved past the "one-click generation" myth. Early enthusiasm around tools like Sora and Pika led many creators to imagine a future where you typed a premise and a finished episode materialized.

That future hasn't fully arrived—and understanding exactly where it breaks down is the most useful thing I can tell you.

- The "Drift" Problem: The Decay of Long-Form Logic

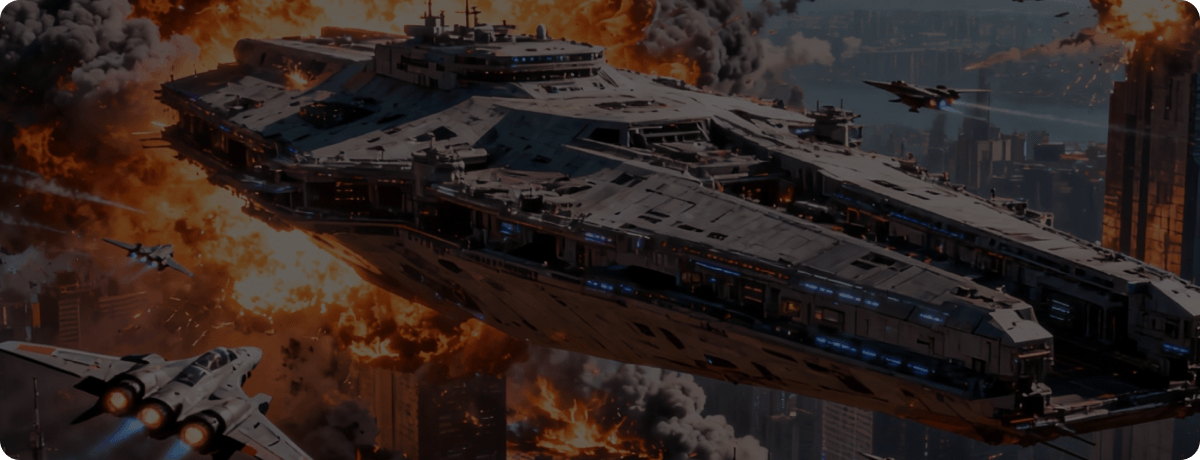

The most significant technical failure in one-click systems is temporal drift. In one shot, your protagonist is wearing a crisp blue jacket; three seconds later, the jacket has vanished into the ether. That's because AI video models (like early Sora or even 2026 iterations) function by predicting the "next likely frame." Over a 22-minute span, the tiny errors in those predictions compound.

- The Physics Hallucination: Lack of Common Sense

AI models do not understand the laws of physics; they only understand the appearance of pixels. In complex anime scenes, particularly high-octane fight sequences, the AI often fails at spatial reasoning.

- Narrative Coherence vs. Visual Pacing

You prompt a "sad conversation." The AI might generate a character crying hysterically because that is the most "statistically likely" visual for sadness. AI often struggles with subtext: the quiet moments where a character's subtle eye movement conveys more than a line of dialogue.

These aren't death knells for AI-generated anime. They're engineering problems, and engineering problems get solved. The 2026 suite from Runway makes a compelling case for how fast that gap is closing.

Gen-4 tackles the drift problem directly: by anchoring generation to reference images, it maintains character appearance, location continuity, and object consistency across scenes in a way that earlier models simply couldn't sustain.

Then there's Aleph, Runway's video-to-video editing model, which applies both local and global transformations to existing footage: adjust a single character's lighting without touching the background, pivot the camera angle on a completed shot, or restructure an environment without regenerating the scene from scratch.

Together, they represent a genuine shift from "AI as generator" to "AI as production partner."

The horizon is closer than it looks.
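To get a feel for why drift bites at episode length, and why restarting from a reference image helps, here is a toy model. It is only a sketch under loud assumptions: 24 frames per second, and every frame adding a small independent error so that accumulated error grows like a random walk. Neither assumption comes from any vendor's documentation, and `per_frame_sigma` is an arbitrary illustrative number.

```python
import math

FPS = 24  # assumed frame rate; the article does not specify one


def frames_in(seconds: int, fps: int = FPS) -> int:
    """Number of frames in a clip of the given length."""
    return seconds * fps


def rms_drift(frames_since_anchor: int, per_frame_sigma: float) -> float:
    """Root-mean-square accumulated error of a random walk after n frames.

    Toy assumption: each frame adds an independent error with standard
    deviation per_frame_sigma, so the spread grows with sqrt(n).
    """
    return per_frame_sigma * math.sqrt(frames_since_anchor)


episode = frames_in(22 * 60)  # a full 22-minute episode
short_shot = frames_in(4)     # one 4-second shot

unanchored = rms_drift(episode, per_frame_sigma=0.01)
anchored = rms_drift(short_shot, per_frame_sigma=0.01)

print(f"frames in episode: {episode}")
print(f"drift with no re-anchoring: {unanchored:.2f}")
print(f"drift when each 4 s shot restarts from a reference: {anchored:.2f}")
```

In this toy model the un-anchored episode drifts roughly 18 times further than a shot that restarts from a reference every four seconds. Real models will not follow a clean square-root law, but the direction of the effect is the point.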

How Does AI Animation Work in 2026?

Understanding what AI can do for animation requires separating the engine from the output, because the gap between the two is where most creators get lost.

At the technical foundation sits a class of models called latent diffusion models: systems trained to reverse a noise-addition process, transforming pure static into coherent imagery frame by frame.
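The "noise-addition process" that these models learn to reverse can be sketched in a few lines. This is a minimal illustration in the style of the standard DDPM formulation, not any specific product's implementation: the linear schedule, its endpoints (1e-4 to 0.02), and the 1,000 steps are commonly used defaults that I am assuming here, and the three-number `pixels` list stands in for an image.

```python
import math
import random


def alpha_bar_schedule(steps: int = 1000, beta_start: float = 1e-4,
                       beta_end: float = 0.02) -> list[float]:
    """Cumulative product of (1 - beta_t) for a linear beta schedule."""
    alpha_bars = []
    running = 1.0
    for t in range(steps):
        beta = beta_start + (beta_end - beta_start) * t / (steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(x0: list[float], alpha_bar: float,
              rng: random.Random) -> list[float]:
    """Sample x_t = sqrt(alpha_bar) * x0 + sqrt(1 - alpha_bar) * noise."""
    signal_weight = math.sqrt(alpha_bar)
    noise_weight = math.sqrt(1.0 - alpha_bar)
    return [signal_weight * v + noise_weight * rng.gauss(0.0, 1.0) for v in x0]


alpha_bars = alpha_bar_schedule()
rng = random.Random(0)
pixels = [0.2, 0.5, 0.9]  # stand-in for image values

slightly_noisy = add_noise(pixels, alpha_bars[10], rng)  # early step
mostly_static = add_noise(pixels, alpha_bars[-1], rng)   # final step
```

By the final step almost none of the original signal is left, which is the "pure static" a trained model starts from when it generates.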

The best 2026 models layer on top of this a Diffusion Transformer (DiT) architecture, which models spatial and temporal relationships across an entire sequence at once. This is the breakthrough responsible for characters no longer subtly reshaping themselves mid-scene.

Latent-space compression completes the picture: video is encoded into a representation up to 64 times smaller before the diffusion process runs, which makes long-form generation computationally viable.
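One way a figure like "64 times smaller" can arise is an encoder that shrinks both height and width by a factor of 8. That factor is my assumption for illustration; the article's sources don't say how the 64 is reached, and this sketch ignores channel counts and any compression along the time axis.

```python
def latent_grid(width: int, height: int, downsample: int = 8) -> tuple[int, int]:
    """Spatial size of the latent grid after downsampling each side."""
    return width // downsample, height // downsample


def position_ratio(width: int, height: int, downsample: int = 8) -> float:
    """How many pixel positions map onto one latent position."""
    latent_w, latent_h = latent_grid(width, height, downsample)
    return (width * height) / (latent_w * latent_h)


frame_w, frame_h = 1280, 720
print(latent_grid(frame_w, frame_h))
print(position_ratio(frame_w, frame_h))
```

For a 1280x720 frame this gives a 160x90 latent grid, so each latent position stands in for 64 pixel positions.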

1 For Anime Specifically

AI now handles the full production stack with varying degrees of autonomy.

- Script generation and story structure are essentially solved. Models like ChatGPT 5 and Gemini 3 can construct episodic narratives with tonal consistency, B-plots, and character arcs from a brief premise.

- Storyboarding, historically a weeks-long process requiring skilled artists, can be compressed into hours via AI storyboard generators that convert dialogue beats into annotated visual drafts.

- Character design, arguably the most style-sensitive task in anime production, can be automated through character generators that maintain visual identity across scenes, with platforms like Kling 2.6 offering face-lock features that anchor a protagonist's appearance across every shot.

2 For Cartoons and 2D Animation

The gains are equally structural.

- In-betweening is now largely automated.

- Background art generation compresses to days rather than months.

- Voice synchronization and lip-sync are handled natively by platforms like Pika 2.5, eliminating a post-production bottleneck that formerly required specialized software.

- Post-production is not immune to the transformation. AI video enhancers refine motion, balance lighting, and improve visual fidelity after the initial generation pass, meaning even rough-output scenes can be polished without a full re-render.

The practical upshot: what traditionally required a team of four to six specialists over eight to twelve weeks now routinely compresses to one creator, forty-eight to seventy-two hours, and a fraction of the budget.
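To put rough numbers on that compression, here is the arithmetic as a sketch. Two assumptions are mine, not the article's: a 40-hour working week, and that the forty-eight to seventy-two hours are working hours rather than elapsed time.

```python
HOURS_PER_WEEK = 40  # assumption: standard full-time week


def person_hours(people: int, weeks: int) -> int:
    """Total labor for a team working full weeks."""
    return people * weeks * HOURS_PER_WEEK


traditional_low = person_hours(4, 8)    # smallest team, shortest schedule
traditional_high = person_hours(6, 12)  # largest team, longest schedule
solo_low, solo_high = 48, 72            # one creator, in hours

smallest_ratio = traditional_low / solo_high
largest_ratio = traditional_high / solo_low

print(f"traditional: {traditional_low} to {traditional_high} person-hours")
print(f"reduction: about {smallest_ratio:.0f}x to {largest_ratio:.0f}x")
```

Under those assumptions the claimed reduction lands somewhere between about 18x and 60x in labor hours.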

One caveat worth naming clearly: no single model handles every animation requirement with equal competence. The professional approach treats these models as a rotating ensemble rather than a universal solution, assigning each scene to the tool best suited for its specific demands.

The Creator's Playbook: How to Create Insanely Good AI Animation

In my observation, the creators producing genuinely compelling AI anime in 2026 share one trait: they treat the tools as a creative conversation, not a vending machine. The output quality gap between a casual user and an intentional director using the same tool is staggering—and it comes down almost entirely to process.

1 The Professional Workflow

The pipeline that consistently delivers broadcast-quality results follows eight stages, whether you're producing a 90-second short or a full anime episode.

Here’s the complete workflow shared by experienced Novi AI users for creating animations with AI.

Step 1 Script & Beat Sheet

Before touching a single AI tool, write the story. Not just a premise, but a structured beat sheet that maps the emotional arc, scene transitions, and character moments.

AI is extraordinarily good at expanding this into full dialogue and scene descriptions, but it cannot manufacture the underlying narrative logic. That's yours to provide.

Step 2 Shot List & Continuity Bible

Document every character's visual identity in a dedicated reference file: hair color, clothing, distinguishing features, recurring props.

This "continuity bible" becomes the anchor for every generation pass. Skip it, and you'll spend three times the time firefighting character drift.
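A continuity bible can be as simple as a structured record per character that you paste into every prompt. The sketch below is one possible shape, not a format any platform requires; the class name, field names, and the sample character are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: the sheet should only change deliberately
class CharacterSheet:
    name: str
    hair_color: str
    clothing: str
    distinguishing_features: tuple[str, ...] = ()
    recurring_props: tuple[str, ...] = ()

    def prompt_prefix(self) -> str:
        """Render the sheet as a description to prepend to every shot prompt."""
        parts = [f"{self.name}: {self.hair_color} hair", self.clothing]
        parts.extend(self.distinguishing_features)
        parts.extend(f"carrying {prop}" for prop in self.recurring_props)
        return ", ".join(parts)


hero = CharacterSheet(
    name="Aiko",  # hypothetical character
    hair_color="silver",
    clothing="crisp blue jacket",
    distinguishing_features=("scar over left eyebrow",),
    recurring_props=("a brass compass",),
)
print(hero.prompt_prefix())
```

Because every prompt starts from the same rendered string, a wardrobe change becomes a single edit to the sheet rather than a hunt through dozens of prompts.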

Step 3 Storyboard as Keyframes

Generate one static keyframe per shot using an image model. This is your animatic layer. It lets you validate timing, composition, and narrative pacing before committing any credits to motion generation. Fail fast here, not in the video generation stage.

Step 4 Animatic (Scratch Timing)

Assemble your keyframes into a timed sequence with scratch audio. This step is routinely skipped by beginners and almost never skipped by professionals. It costs nothing and saves everything.
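Scratch timing is mostly bookkeeping, so it is easy to sketch. The snippet below lays shots back to back and reports how far the running total is from a target runtime; the `Shot` type and the sample durations are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    shot_id: str
    seconds: float


def timecodes(shots: list[Shot]) -> list[tuple[str, float, float]]:
    """Return (shot_id, start, end) in seconds for a back-to-back sequence."""
    result = []
    cursor = 0.0
    for shot in shots:
        result.append((shot.shot_id, cursor, cursor + shot.seconds))
        cursor += shot.seconds
    return result


def seconds_remaining(shots: list[Shot], target_seconds: float) -> float:
    """How much runtime is still unfilled relative to the target."""
    return target_seconds - sum(shot.seconds for shot in shots)


animatic = [Shot("s01", 3.0), Shot("s02", 4.5), Shot("s03", 2.5)]
for shot_id, start, end in timecodes(animatic):
    print(f"{shot_id}: {start:.1f}s to {end:.1f}s")
print(f"left to fill in a 22-minute episode: {seconds_remaining(animatic, 22 * 60):.1f}s")
```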

Step 5 Asset Pack Build

For each character: a neutral full-body master, a set of five to eight emotional expressions, key action poses, and recurring background plates. This pack is your production's source of truth. Change it only deliberately.
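The asset pack described above works well as a checklist you can validate before spending credits. This is a sketch of that idea; the class, its field names, and the file names in the example are hypothetical, and the only rule taken from the text is the five-to-eight expression range.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AssetPack:
    character: str
    neutral_master: Optional[str] = None  # path to the full-body master
    expressions: list[str] = field(default_factory=list)
    action_poses: list[str] = field(default_factory=list)
    background_plates: list[str] = field(default_factory=list)

    def problems(self) -> list[str]:
        """List everything missing from the pack; empty means ready."""
        issues = []
        if not self.neutral_master:
            issues.append("missing neutral full-body master")
        if not 5 <= len(self.expressions) <= 8:
            issues.append(
                f"expected 5-8 expressions, found {len(self.expressions)}"
            )
        if not self.action_poses:
            issues.append("no key action poses")
        if not self.background_plates:
            issues.append("no recurring background plates")
        return issues


pack = AssetPack(
    character="Aiko",
    neutral_master="aiko_master.png",
    expressions=["happy.png", "sad.png", "angry.png"],
    action_poses=["run.png"],
)
print(pack.problems())
```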

Step 6 Shot Production

Generate AI video clips (typically two to six seconds per shot for maximum stability), select the best takes, and apply surgical edits. Keep camera movement minimal; reserve dramatic moves for one or two hero moments per episode.
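The two-to-six-second guideline translates into a simple planning rule: cut each scene into the fewest equal clips that stay under the ceiling. The function below is a sketch of that rule; its name and the even-split strategy are my own choices, not something the tools prescribe.

```python
import math


def split_into_clips(scene_seconds: float, max_clip: float = 6.0,
                     min_clip: float = 2.0) -> list[float]:
    """Split a scene into equal clips no longer than max_clip seconds."""
    if scene_seconds < min_clip:
        raise ValueError("scene is shorter than the minimum clip length")
    # Fewest clips that keep every clip at or under the ceiling.
    count = math.ceil(scene_seconds / max_clip)
    return [scene_seconds / count] * count


print(split_into_clips(20.0))           # a 20-second scene
print(len(split_into_clips(22 * 60.0))) # clip count for a whole episode
```

Even at the six-second ceiling, a 22-minute episode (1,320 seconds) needs at least 220 clips, which is why the asset pack and continuity bible matter so much.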

Step 7 Edit, Sound & Polish

Stitch, color-grade across shots for consistency, apply deflicker passes where needed, add music and voice. AI handles lip-sync natively on most modern platforms.

Step 8 Delivery

Export masters in ProRes 422 or high-bitrate H.264, plus social verticals. Archive your storyboard PDF, prompts, and reference pack. Future episodes become dramatically faster.
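If you deliver with FFmpeg, the exports above might look like the commands below. Treat this as a sketch: it assumes FFmpeg is installed with the `prores_ks` and `libx264` encoders, the 20M bitrate is my stand-in for "high-bitrate", the 1080x1920 vertical size is a common choice rather than a requirement, and the file names are hypothetical.

```python
def prores_422_cmd(src: str, dst: str) -> list[str]:
    """Master export: ProRes 422 video with uncompressed PCM audio."""
    return ["ffmpeg", "-i", src,
            "-c:v", "prores_ks", "-profile:v", "2",  # profile 2 = standard 422
            "-c:a", "pcm_s16le", dst]


def h264_cmd(src: str, dst: str, bitrate: str = "20M") -> list[str]:
    """Distribution export: H.264 at a fixed, assumed bitrate."""
    return ["ffmpeg", "-i", src,
            "-c:v", "libx264", "-b:v", bitrate, "-pix_fmt", "yuv420p",
            "-c:a", "aac", dst]


def vertical_cmd(src: str, dst: str) -> list[str]:
    """Social vertical: center-crop to 9:16, then scale to 1080x1920."""
    return ["ffmpeg", "-i", src,
            "-vf", "crop=ih*9/16:ih,scale=1080:1920",
            "-c:v", "libx264", "-c:a", "aac", dst]


for cmd in (prores_422_cmd("episode.mov", "episode_master.mov"),
            h264_cmd("episode.mov", "episode_web.mp4"),
            vertical_cmd("episode.mov", "episode_vertical.mp4")):
    print(" ".join(cmd))
```

Each list can be passed to `subprocess.run(cmd, check=True)` once you have confirmed the settings suit your platform's delivery specs.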

2 Top-Tier AI Animation Tools in 2026

| Model | Best For | Max Length | Standout Feature |
|---|---|---|---|
| Runway Gen-4.5 | Professional Editing | 60s (Chained) | #1 Rated Benchmark: 1,247 Elo score for physical accuracy. |
| Kling 3.0 | Long-Form Motion | Up to 2 min | Swiss Army Knife: Only model with native 4K at 60fps and multi-shot storyboarding. |
| Google Veo 3.1 | 4K Production | 60s (Chained) | Spatial Audio: 3D soundscapes that match on-screen movement (e.g., cars passing). |
| Sora 2 | Cinematic Realism | 25s | Physics Simulation: Best-in-class cinematic color grading and complex scene understanding. |
| Seedance 2.0 | Storytelling | 15–20s | Multi-Modal Input: Can process 12 reference files (image/video/audio) at once for perfect consistency. |
| Pika 2.5 | Social Media | 5–10s | Creative Effects: Includes "Pikaffects" (Melt, Explode) and 42-second rapid renders. |
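The "Max Length" column above can double as a first-pass filter when you assign shots to models. The sketch below hard-codes those limits as listed (taking the upper end of each range, and treating the chained figures as usable length); the function name is hypothetical, and real limits depend on plan and settings.

```python
# Max clip length in seconds, copied from the table above.
MAX_SECONDS = {
    "Runway Gen-4.5": 60,   # chained
    "Kling 3.0": 120,       # "up to 2 min"
    "Google Veo 3.1": 60,   # chained
    "Sora 2": 25,
    "Seedance 2.0": 20,     # upper end of 15-20s
    "Pika 2.5": 10,         # upper end of 5-10s
}


def models_for(shot_seconds: float) -> list[str]:
    """Models whose listed max length can cover the shot in one pass."""
    return [name for name, limit in MAX_SECONDS.items()
            if limit >= shot_seconds]


print(models_for(4))   # short shot: every model qualifies
print(models_for(30))  # longer take: only the long-form options remain
print(models_for(90))
```

Length is only the first cut; the "Best For" column is what should decide between the models that remain.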

Access All Top Models with Novi AI

- Access the latest versions of Runway, Kling, Veo, Sora, and more in one place with Novi AI. Skip switching tools, create faster, and bring your ideas to life with less effort and more control.

- Text-to-video and image-to-video generation in a single workflow.

- Create animated AI love stories with a storyboard and scene prompts.

3 Pro Tips for "Insane" Quality

The difference between competent AI animation and genuinely impressive AI animation comes down to a handful of disciplines that most creators ignore.

- Lock one variable at a time. When generating across scenes, keep outfit, location, and lighting constant and change only what the story requires. Every additional variable introduced is a new opportunity for inconsistency.

- Generate keyframes explicitly. Rather than asking AI to animate freely between a start and end state, define anchor poses. Let motion happen between controlled points, not across undefined space.

- Animate the subject, not the background. Complex, dynamic backgrounds compound temporal instability. Keep environments simple and let character performance carry the scene.

- Shorten clip duration to reduce drift. Two-to-four-second clips maintain significantly better visual stability than eight-to-ten-second clips. Cut more frequently, generate shorter takes.

- Build your animatic before committing to motion generation. Industry analysis found that audience dropout rates hit 92% for content with inconsistent characters. That number drops dramatically when creators validate their visual continuity at the animatic stage — before a single credit is spent on video generation.

- Use reference images as anchors, not afterthoughts. The most consistent results come from image-to-video generation, not text-to-video. Generate your keyframes first, then animate from them. This gives the model a visual contract to work from, rather than a verbal description to interpret.
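The "lock one variable at a time" discipline is easy to check mechanically if each shot is described by a small dictionary of settings. This is a sketch of that check; the keys and values are hypothetical examples.

```python
def changed_variables(previous: dict[str, str],
                      current: dict[str, str]) -> set[str]:
    """Names of the settings that differ between two consecutive shots."""
    keys = previous.keys() | current.keys()
    return {key for key in keys if previous.get(key) != current.get(key)}


shot_a = {"outfit": "blue jacket", "location": "rooftop", "lighting": "dusk"}
shot_b = {"outfit": "blue jacket", "location": "alley", "lighting": "neon night"}

changes = changed_variables(shot_a, shot_b)
if len(changes) > 1:
    print(f"warning: {len(changes)} variables changed at once: {sorted(changes)}")
```

Here location and lighting both change between shots, so the check flags the cut as a likely source of inconsistency.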

Case Study: Novi AI and the Democratization of Storytelling

The landscape of 2026 is becoming a crowded theater of "solutions," with a new breed of AI tools fighting to define the future of animation. Novi AI has carved out its own corner here.

At its foundation, Novi AI is an all-in-one AI video creation studio. The core workflow is deceptively simple: input a written story, script, or idea—up to 4,000 characters—and the platform generates a fully animated, multi-scene video complete with voiceover, subtitles, background music, and synchronized visual sequences.

What elevates it beyond a generic text-to-video tool is the architecture underneath.

Pillar 1 The 10-Model Agile Stack

Novi AI doesn't commit to a single model; it utilizes an intelligent routing system across 10 frontier engines (including Sora 2, Veo 3.1, and Runway Gen-4). By matching specific shots to the model best suited for physics, motion, or style, it ensures the highest technical output for every frame.

Pillar 2 Native Aesthetic DNA

These are not post-production filters. Novi AI’s styles—from Ghibli-esque painterly tones to Pixar-grade 3D—dictate the AI’s generation behavior from frame one. This ensures deep stylistic integrity that makes the difference between "AI-generated" and "artistically directed."

Pillar 3 Surgical Storyboard Control

The platform replaces "black box" generation with a fully editable storyboard. Directors can adjust timestamped shots, rewrite individual prompts, and use image-to-image repainting for surgical visual corrections. It is the first tool designed to support a director's intent, not just a generator's output.

Pillar 4 Asset Heritage & Production Continuity

Serialized storytelling requires consistency. Novi AI’s material library records every character and background plate for instant reuse in future episodes. Combined with a continuity system that aligns voiceovers and subtitles across 60+ languages, it eliminates the "drift" that plagues lower-tier tools.

Will AI Replace Animators?

Bluntly: it already has, for certain roles. And it hasn't, for others. Both statements are true simultaneously.

A 2025 report from Luminate, one of the most rigorously compiled industry analyses of its kind, identified in-betweeners and background artists as the roles most acutely at risk.

These are the high-volume, technically repetitive tasks that AI handles with ease. Studios running AI-assisted pipelines have demonstrably reduced headcount in these areas while maintaining or increasing output volume.

But evidence suggests a more nuanced trend at the senior creative level.

Khalid Latif, Global Executive Creative Director at VML Health, described AI to LBB Online as "a weird creative partner"—not a replacement, but something that now sits at the center of the creative process rather than its periphery.

Mat Delorenzi, a seasoned executive producer, noted that storyboarding, rough animation mockups, and style frame samples are all now handled in-house at a fraction of their former cost—but that emotional nuance in character performance remains irreducibly human.

The most honest framing I've encountered: AI won't replace animators, but it will replace those who refuse to adapt. The survival skills are specific—artistic taste, foundational knowledge of weight and timing, the capacity to art-direct AI output rather than simply accept it. Junior and mid-level professionals entering the field now face an expectation to arrive as a one-person creative studio, fluent across tools that didn't exist eighteen months ago.

The 3D animation market is projected to grow from $12.15 billion in 2020 to $35.38 billion by 2027. The industry is not contracting. Its division of labor is being reshaped.

FAQs

1 What is AI animation?

AI animation is the process of using artificial intelligence to generate, automate, or enhance animated content—from individual frames to full video sequences—without requiring traditional drawing or animation skills.

Modern AI animation tools can create characters, scenes, motion, voiceovers, and lip-sync from a simple text prompt or uploaded image.

2 Which AI animation tool is best for beginners?

Novi AI is one of the most beginner-accessible options available in 2026. Input a story, choose a visual style (Anime, Ghibli, Pixar, and more), and the platform generates a fully animated video with voiceover, subtitles, and music in minutes. No editing experience required.

3 Can I create an animated video with an AI voiceover?

Yes. Platforms like Novi AI offer 800+ AI-generated voice profiles across 60+ languages, with automatic lip-sync built into the generation pipeline. Magiclight and Kling also include native voiceover and audio-sync features.

You don't need external recording equipment or a separate audio workflow. The voiceover is generated alongside the animation in a single pass.

4 How can I use AI to animate an image?

Upload your image to an image-to-video tool—Kling 3.0, Runway Gen-4.5, Pika 2.5, or Novi AI all support this workflow. Add a motion prompt describing how the scene should move (e.g., "character turns and looks left, wind blows through hair"), select your output duration, and generate.

5 How can I make anime characters sing using AI?

The standard workflow has three steps:

- (1) Generate the audio — use an AI music tool like Suno or ACE-Step to create the song, including vocals in the character's intended voice;

- (2) Apply lip-sync — upload both the character image and the audio track to a lip-sync tool like Runway Act-2, Pika 2.5, or a dedicated service like LipSync.video, which maps vocal phonemes to mouth movements frame by frame;

- (3) Add motion — optionally run the lip-synced output through an image-to-video model to add body movement and scene dynamics.

The full pipeline can be completed on a single platform like Novi AI, or assembled across specialized tools for higher fidelity.

Conclusion: The Era of the Solo Studio

Stephan Bugaj, an Innovation Emmy winner and Pixar veteran, released The Seeker in December 2025: a commercially distributed sci-fi short film produced almost entirely with generative AI, at a fraction of conventional production cost, reaching global streaming platforms. It was made by one person with industry expertise, creative direction, and the right tools.

That moment tells us something important about where we are.

The era of the solo studio is not a future scenario. It is a current reality, accessible to anyone willing to develop the craft of directing AI rather than merely operating it. A 22-minute anime episode can be produced today by a small team—or even a dedicated individual—at a scale and quality that would have been inconceivable in 2022.

What that episode will lack, unless you invest in it consciously, is the irreducible human quality that separates forgettable content from work that resonates. AI accelerates everything except artistic vision. It compresses timelines, reduces costs, and erases barriers. It doesn't supply taste, instinct, or the particular lived experience that makes a story feel true.

In the end, the most important question was never whether AI could make a full anime episode. It always was—and remains—whether you have something worth saying.
